Figure

Description

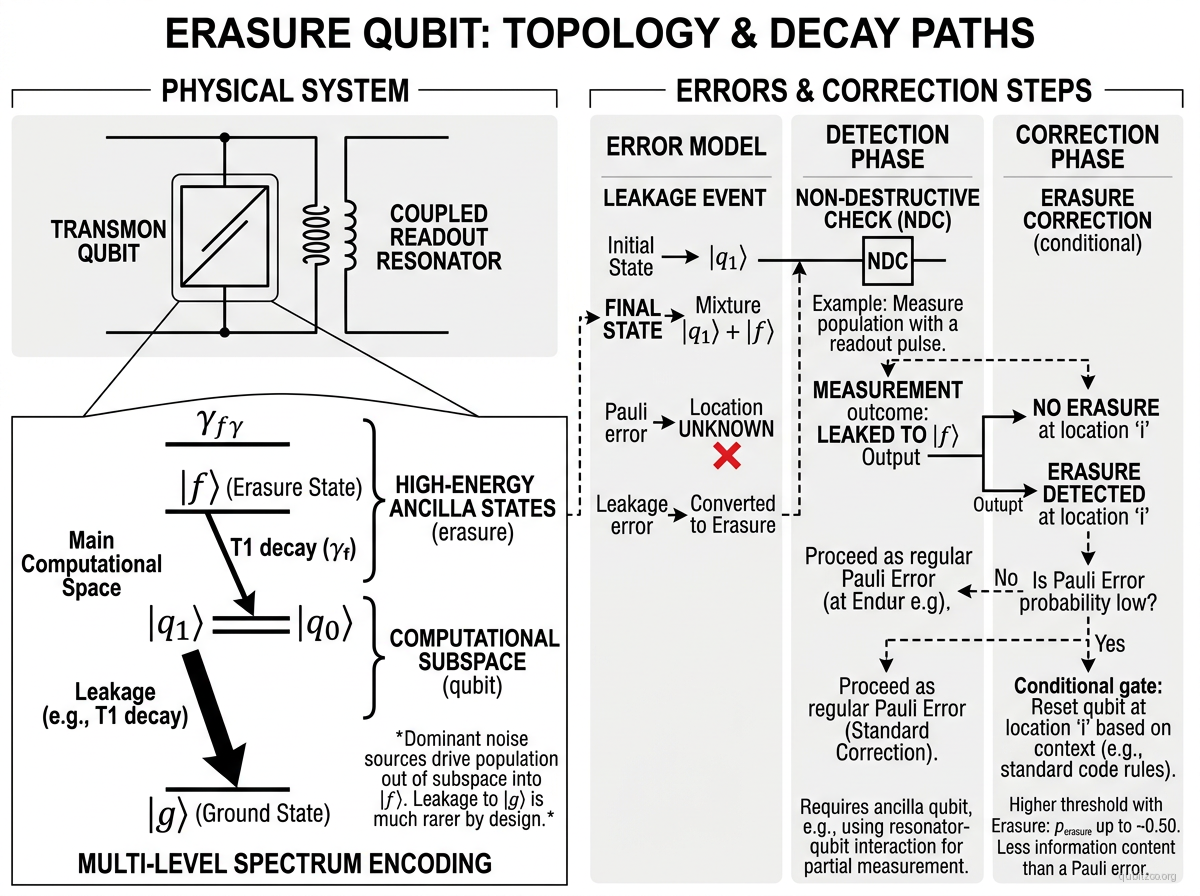

An erasure qubit is a qubit encoding paradigm in which the dominant physical errors are converted into detectable erasure errors: the qubit leaks to a known non-computational state that can be identified by a non-destructive check, revealing the location (but not the content) of the error. The key insight, established by the theory of the quantum erasure channel, is that an error whose location is known costs far less to correct than a Pauli error at an unknown location: the surface code threshold for erasure errors is approximately 50%, compared to approximately 1% for depolarizing noise. The resulting 3–10× reduction in error-correction overhead motivates engineering qubits whose dominant failure mode is erasure.
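The advantage of knowing where an error occurred can be illustrated with a classical analogy. The sketch below uses a 5-bit repetition code rather than a surface code, and the helper names (`decode_erasures`, `decode_majority`, `ERASED`) are made up for this illustration. It shows that flagged erasures can be tolerated in 4 of 5 positions, while unflagged bit flips are only tolerated in 2.

```python
from collections import Counter

ERASED = None  # hypothetical marker for a position flagged as erased


def decode_erasures(received):
    """Decode a repetition codeword whose erased positions are flagged.

    Any single surviving bit determines the codeword, so a length-n code
    tolerates up to n - 1 erasures.
    """
    survivors = [bit for bit in received if bit is not ERASED]
    if not survivors:
        raise ValueError("all positions erased; nothing left to decode")
    return survivors[0]


def decode_majority(received):
    """Decode unflagged bit flips by majority vote.

    With unknown error locations, a length-n code (n odd) tolerates only
    floor((n - 1) / 2) flips.
    """
    return Counter(received).most_common(1)[0][0]


# The codeword 11111 was sent in each case.
print(decode_erasures([ERASED, ERASED, 1, ERASED, ERASED]))  # 4 erasures: recovers 1
print(decode_majority([0, 0, 1, 1, 1]))                      # 2 flips: recovers 1
print(decode_majority([0, 0, 0, 1, 1]))                      # 3 flips: decodes to 0 (wrong)
```

The same counting carries over to quantum codes in spirit, although a real surface-code decoder is considerably more involved than either function here.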

Erasure qubits have been demonstrated across multiple platforms:

Neutral atoms (alkaline earth): In optical tweezer arrays, the metastable clock state and ground state encode the qubit, while Rydberg-mediated gate errors predominantly result in atom loss — a detectable erasure (Wu et al. 2022, Ma et al. 2023).

Dual-rail superconducting: Two coupled transmons or cavities encode the logical states $|01\rangle$ and $|10\rangle$; photon loss sends the system to $|00\rangle$, a detectable erasure outside the code space (Levine et al. 2024).

Trapped ions: Metastable shelving states can convert decay errors to detectable leakage events.

Hamiltonian

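The erasure qubit is an encoding paradigm, not a single physical system. The general structure is a logical qubit encoded in a subspace $\mathcal{C}$ such that the dominant error channel maps $\mathcal{C}$ to an orthogonal, detectable subspace $\mathcal{E}$:

$$\dot{\rho} = -\frac{i}{\hbar}[H, \rho] + \sum_k \left( L_k \rho L_k^\dagger - \tfrac{1}{2}\{L_k^\dagger L_k, \rho\} \right)$$

where the Lindblad operators satisfy $L_k P_{\mathcal{C}} = P_{\mathcal{E}} L_k P_{\mathcal{C}}$, meaning errors always leave the code space and can be detected by measuring the projector $P_{\mathcal{E}}$.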

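For the dual-rail superconducting encoding, the effective Hamiltonian is:

$$H = \hbar\omega_a\, a^\dagger a + \hbar\omega_b\, b^\dagger b + \hbar g\,(a^\dagger b + a b^\dagger)$$

with $a$ and $b$ the lowering operators of the two modes, the code space spanned by $\{|01\rangle, |10\rangle\}$, and the dominant error (single photon loss) producing the detectable state $|00\rangle$.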
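The claim that single photon loss always takes a dual-rail state out of the code space can be checked directly. The sketch below assumes the usual dual-rail convention (code states $|01\rangle$ and $|10\rangle$, each mode restricted to 0 or 1 photons). States are stored as plain dictionaries, and the names `apply_loss`, `code_weight`, and `CODE_SPACE` are illustrative only.

```python
# Occupations (n_a, n_b) spanning the assumed dual-rail code space.
CODE_SPACE = {(0, 1), (1, 0)}


def apply_loss(state, mode):
    """Apply the photon-loss (lowering) operator on `mode` (0 or 1).

    `state` maps occupation tuples (n_a, n_b) to complex amplitudes.
    Within the truncated space: a|1> = |0> and a|0> = 0.
    """
    out = {}
    for occ, amp in state.items():
        if occ[mode] == 1:
            new_occ = list(occ)
            new_occ[mode] = 0
            key = tuple(new_occ)
            out[key] = out.get(key, 0) + amp
    return out


def code_weight(state):
    """Sum of |amplitude|^2 over components that lie in the code space."""
    return sum(abs(amp) ** 2 for occ, amp in state.items() if occ in CODE_SPACE)


# An arbitrary normalized code state: 0.6|01> + 0.8i|10>.
psi = {(0, 1): 0.6, (1, 0): 0.8j}

for mode in (0, 1):
    after = apply_loss(psi, mode)
    # Whichever mode loses the photon, only |00> remains.
    print(mode, after, code_weight(after))
```

Because every surviving component is $|00\rangle$, a check that distinguishes $|00\rangle$ from the single-photon states flags the error without revealing which logical state was stored.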

Motivation

Quantum error correction overhead is dominated by the rate and type of physical errors. Unheralded Pauli errors require $O(d^2)$ physical qubits per logical qubit, with the code distance $d$ determined by $p/p_{\mathrm{th}}$, the ratio of the physical error rate to the threshold. Erasure errors, because their locations are known, effectively double the code distance for free: the same distance-$d$ code corrects $d-1$ erasures vs. $\lfloor (d-1)/2 \rfloor$ Pauli errors. Converting dominant errors to erasures can reduce the physical-to-logical qubit ratio by factors of 3–10×, potentially bringing practical fault-tolerant computing within reach of nearer-term hardware scales.
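A rough feel for the overhead argument comes from a toy scaling model. The sketch below assumes the common heuristic that the logical error rate scales as `prefactor * ratio ** k`, where `ratio` is the physical error rate divided by the threshold and `k` is the number of faults needed to cause a logical failure. The prefactor of 0.03, the `2 * d**2 - 1` qubit count of a rotated surface code, and the choice to hold `ratio` fixed for both error types are all assumptions of this illustration, not results from the cited papers.

```python
def pauli_exponent(d):
    """Faults needed for a logical failure with unheralded Pauli errors."""
    return (d + 1) // 2


def erasure_exponent(d):
    """Faults needed when every error is a flagged erasure (idealized)."""
    return d


def min_distance(ratio, target, exponent, prefactor=0.03):
    """Smallest odd distance d with prefactor * ratio**exponent(d) <= target."""
    if not 0 < ratio < 1:
        raise ValueError("ratio must be below threshold (0 < ratio < 1)")
    d = 3
    while prefactor * ratio ** exponent(d) > target:
        d += 2
    return d


def physical_qubits(d):
    """Data plus ancilla qubits of a rotated surface code patch (assumed layout)."""
    return 2 * d * d - 1


ratio, target = 0.1, 1e-12  # hypothetical operating point and logical target
d_pauli = min_distance(ratio, target, pauli_exponent)
d_erasure = min_distance(ratio, target, erasure_exponent)
print(d_pauli, physical_qubits(d_pauli))      # 21 881
print(d_erasure, physical_qubits(d_erasure))  # 11 241
```

At this operating point the model gives 881 versus 241 physical qubits, roughly a 3.7× reduction from the distance-doubling effect alone. Real devices have a mix of erasure and residual Pauli errors and different thresholds for each, so the actual saving depends on details this sketch ignores.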

Experimental Status

Erasure conversion theory — Stace, Barrett, and Doherty (2009):

- Established that the surface code threshold for erasure errors is ~50%, compared to ~1% for depolarizing noise.

Neutral atom erasure — Wu et al. (2022):

- Proposed erasure conversion for alkaline earth Rydberg atom arrays using the metastable clock state.

- Theoretical framework for converting dominant Rydberg gate errors to detectable erasures.

High-fidelity Rydberg erasure — Scholl et al. (2023):

- Demonstrated erasure conversion in tweezer arrays with >98% erasure detection efficiency.

- Mid-circuit erasure detection compatible with real-time decoding.

Dual-rail superconducting — Levine et al. (2024):

- Demonstrated long-coherence dual-rail erasure qubit using tunable transmons.

- Achieved erasure fractions of >99% of total errors.

Key Metrics

| Metric | Value | Notes | Reference |
|---|---|---|---|
| Erasure fraction | >99% | Fraction of total errors that are detectable erasures | Levine et al. 2024 |
| Erasure detection efficiency | >98% | Probability of detecting an erasure event | Scholl et al. 2023 |
| Surface code erasure threshold | ~50% | Vs. ~1% for depolarizing Pauli errors | Stace et al. 2009 |
| QEC overhead reduction | 3–10× | Compared to equivalent unheralded error rate | n/a |
| 2Q gate fidelity (erasure-converted) | 99.0–99.5% | After post-selecting on no erasure detected | Scholl et al. 2023 |
| Residual Pauli error rate | 0.1–0.5% | Errors that are NOT converted to erasure | Levine et al. 2024 |

References

Theory

- Y. Wu et al., “Erasure conversion for fault-tolerant quantum computing in alkaline earth Rydberg atom arrays,” Phys. Rev. A 105, 062418 (2022) — arXiv:2201.03540

- T. M. Stace, S. D. Barrett, and A. C. Doherty, “Thresholds for Topological Codes in the Presence of Loss,” Phys. Rev. Lett. 102, 200501 (2009)

Experimental demonstrations

- P. Scholl et al., “Erasure conversion in a high-fidelity Rydberg quantum simulator,” Nature 622, 273 (2023)

- H. Levine et al., “Demonstrating a Long-Coherence Dual-Rail Erasure Qubit Using Tunable Transmons,” Phys. Rev. X 14, 011051 (2024) — arXiv:2307.08737

Linked Papers

Evergreen context

- erasure-error-vs-pauli-error — the decoder-level reason erasure-biased architectures buy disproportionate logical overhead relief

- threshold-theorem — connects the physical erasure hierarchy back to the central below-threshold scaling question

- quantum-hardware — helpful umbrella when comparing which hardware stacks can realistically turn dominant faults into flagged leakage instead of hidden Pauli errors

Related Entries

- dual-rail-photonic-qubit — photonic dual-rail encoding, a natural erasure architecture

- dual-rail-superconducting-qubit — superconducting implementation of dual-rail erasure

- alkaline-earth-neutral-atom-clock-qubit — provides the metastable state encoding for atom-based erasure

- surface-code-logical-qubit — primary QEC code benefiting from erasure conversion

- kerr-cat-qubit — complementary approach using noise bias rather than erasure conversion

- transmon — baseline superconducting qubit that erasure schemes aim to improve upon